Algorithmic Bias and Retaliation in the Workplace

When your employer uses algorithms, automated systems, or AI tools to make decisions about hiring, promotions, discipline, or layoffs, discrimination doesn’t disappear—it just gets harder to see. You have legal rights even when the bias is hidden behind technology.

If an algorithm flagged you for termination, productivity software scored you unfairly, or you faced retaliation after questioning automated decisions, you may have strong legal claims. New York employment law protects you from AI discrimination just as it protects you from human bias.

Contact us today for a confidential consultation about your case.

What Is AI Discrimination and How Does It Happen?

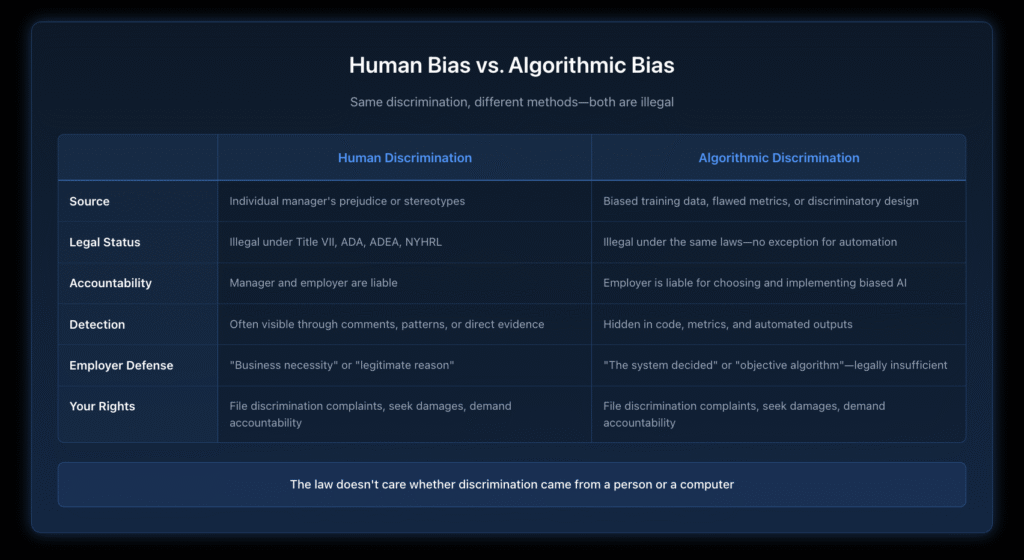

AI discrimination occurs when automated systems, algorithms, or artificial intelligence tools make employment decisions that harm you based on protected characteristics like age, race, gender, disability, or pregnancy status. The algorithm might not “intend” to discriminate, but the law doesn’t care about intent—it cares about impact.

Your employer chooses which AI tools to use. When those tools produce discriminatory results, your employer is legally accountable. Think of it this way: if a hiring manager discriminates, the company is liable. When an algorithm discriminates, the same principle applies.

How Do AI Tools Create Discrimination?

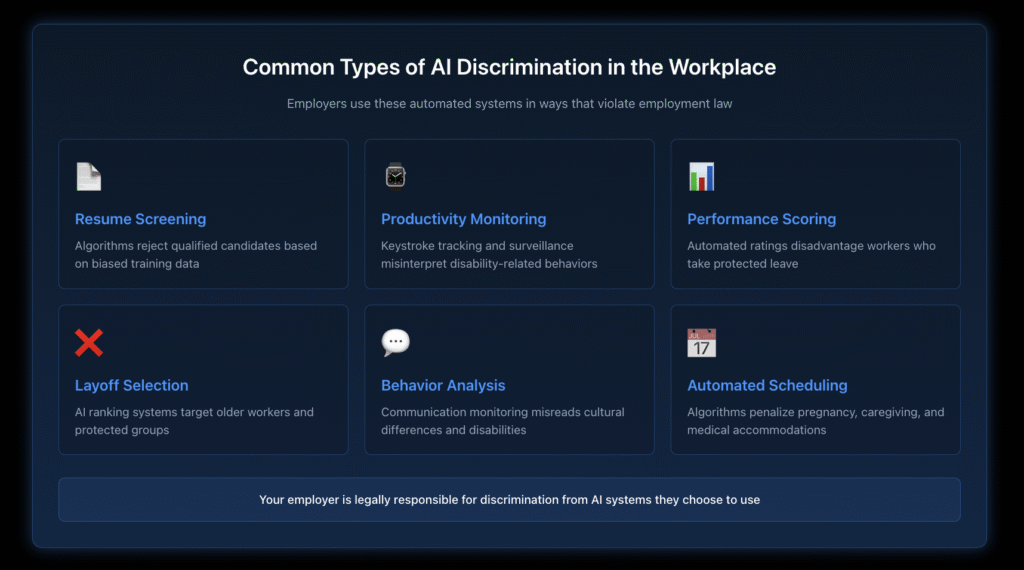

Modern employers increasingly rely on automated systems for workplace decisions. These tools often reflect the biases of their training data, design choices, or implementation. The EEOC has issued specific guidance on how these AI tools can create discrimination. Common discriminatory AI tools include:

Resume-scanning algorithms that automatically reject qualified candidates based on factors that correlate with protected characteristics. If an algorithm learned from a company’s past hiring patterns where management was predominantly male, it might downgrade resumes that signal the applicant is a woman.

Productivity monitoring software that tracks every keystroke, mouse movement, login time, and webcam activity. These tools frequently misinterpret disability-related behaviors, punish workers who take protected leave, or flag older workers as “less engaged” when they work differently than younger employees.

Automated performance scoring systems that generate ratings without human oversight. When these systems weigh metrics that disadvantage certain groups—like penalizing workers who take FMLA leave or rating employees poorly for working modified schedules as a disability accommodation—they violate employment law.

AI tools for identifying “low performers” during layoffs. Companies increasingly use algorithms to rank employees for termination. When these systems rely on biased metrics or lack proper validation, they can systematically target older workers, employees with disabilities, pregnant workers, or workers who’ve taken protected leave.

Automated coaching and behavior monitoring that misinterprets communication styles. AI tools analyzing emails, messages, or speech patterns often struggle with cultural differences, disabilities affecting communication, or non-native English speakers—creating discriminatory coaching or discipline.

Scheduling algorithms that penalize pregnancy, caregiving, or disabilities. Automated scheduling systems that dock workers for medical appointments, childcare needs, or accommodation-related time away from their desks often violate federal and New York law.

If you believe an AI system was used to discriminate against you, discipline you, or terminate your employment, contact us. You may have significant legal claims even when the decision came from an algorithm.

What Are the Warning Signs of AI Discrimination?

Employees often don’t realize they’re experiencing algorithmic bias until it’s too late. Watch for these red flags:

Sudden negative performance scores after new software was introduced, especially if your actual work quality hasn’t changed. If you were meeting expectations under human evaluation but started receiving automated write-ups or low ratings, the system may be discriminatory.

Being flagged as “inactive” or “unproductive” despite performing your work duties. Productivity monitoring tools frequently misinterpret disability-related breaks, different work patterns, or time spent on tasks the algorithm doesn’t recognize.

Being told that “the system” or “the algorithm” selected you for termination, demotion, or investigation. When management hides behind automated decision-making, it’s often to avoid accountability for discriminatory choices. The law doesn’t allow employers to blame the computer.

AI misinterprets disability-related behaviors or time away from your desk. Automated monitoring often can’t distinguish between disability accommodations and poor performance. If you have a disability and the system flags you unfairly, that’s discrimination.

Disproportionate automated write-ups for older workers in your department. If employees over 40 receive automated discipline at higher rates than younger workers, that pattern can support an age discrimination claim.

Automated discipline right after protected activity, like requesting accommodations, reporting discrimination, taking FMLA leave, or filing a complaint. Retaliation doesn’t become legal just because an algorithm generates the paperwork.

Performance scores dropping after a pregnancy announcement or medical leave. Algorithms that penalize workers for absence patterns without accounting for protected leave are discriminatory.

Different job duties as the employer implements automation. If you’re being pushed out or set up to fail as AI tools replace your work, you may be experiencing age discrimination or disability discrimination.

Is AI Discrimination Actually Illegal?

Yes. Absolutely. Discrimination doesn’t become legal because a computer did it instead of a person.

Your Employer Is Responsible for the AI Tools They Choose

When your employer selects and implements AI systems, they’re responsible for those systems’ outcomes. The law treats algorithmic discrimination exactly like human discrimination. If a manager can’t legally refuse to hire someone because they’re over 50, an AI system can’t do it either.

The employer controls the tool. Your company chose the vendor, configured the system, decided which metrics to prioritize, and determined how to use the results. Those choices carry legal responsibility.

Algorithms that disadvantage protected classes violate civil rights laws. Whether the discrimination comes from a hiring manager or a hiring algorithm, Title VII, the Americans with Disabilities Act, the Age Discrimination in Employment Act, and New York State and City Human Rights Laws all apply.

Retaliation for challenging biased AI is still retaliation. If you raise concerns about unfair automated systems and face punishment, that’s illegal retaliation. You’re protected when you push back on discriminatory tools.

Workers cannot be terminated based on data that disproportionately harms protected groups. Courts recognize “disparate impact” claims where facially neutral policies have discriminatory effects. AI systems that produce such effects are legally vulnerable.

You have whistleblower protections for reporting biased AI. If you report discriminatory AI systems internally to management or HR, or externally to government agencies, retaliation against you is illegal under multiple New York laws, including Labor Law Sections 740 and 741.

Contact us at (212) 600-9534 to schedule a confidential consultation.

What About Retaliation for Questioning AI Systems?

Retaliation claims are increasingly tied to AI and automated workplace monitoring. Here’s what you need to know:

You’re protected when you complain about unfair automated performance metrics. If the AI scoring system seems biased and you raise concerns, your employer can’t punish you for speaking up.

Termination after raising concerns about biased algorithmic layoff selection can support a retaliation claim. When you point out that the AI unfairly targeted older workers, pregnant employees, or disabled workers, you’re engaging in protected activity.

Management can’t hide behind algorithms to justify retaliatory decisions. Even if they claim “the system generated the write-up,” if that write-up came immediately after you filed a discrimination complaint, it’s likely retaliation.

AI scoring that drops suddenly after protected activity, like accommodation requests, medical leave, discrimination complaints, or whistleblowing reports, often indicates retaliation. The timing matters more than the excuse.

Automated write-ups for “not meeting AI expectations” right after reporting misconduct are suspicious. Courts look closely at timing, not just the employer’s stated reason.

Your employer doesn’t get to claim the decision was “neutral” or “objective” just because software made it. Retaliatory intent can be proven through timing, patterns, and the employer’s awareness of your protected activity.

How Do AI-Driven Layoffs Create Discrimination?

Algorithmic layoffs—also called automated workforce reductions or AI-driven RIFs (reductions in force)—are increasingly common. They’re also increasingly discriminatory.

How Companies Use AI to Select Employees for Layoffs

Employers rank workers using algorithms that score performance, productivity, attendance, or other metrics. The lowest-ranked employees get terminated. Sounds objective, right? It’s not.

The metrics are rarely neutral. Algorithms that penalize time away from desks harm workers with disabilities. Systems that downgrade employees who’ve taken leave discriminate against pregnant workers and those using FMLA. Tools that favor “fast” communication styles over thorough work disadvantage older employees.

Biased scoring can target specific groups. When layoff algorithms consistently rank older workers, pregnant employees, disabled workers, caregivers, or recent whistleblowers at the bottom, that’s discrimination.

The system can be manipulated by managers. Even “automated” decisions often allow human input. A manager with discriminatory intent can adjust an algorithm’s inputs to ensure specific workers receive low scores.

Demographic patterns reveal discrimination. If the workers selected for termination are disproportionately over 40, female, disabled, or from protected racial or ethnic groups, that disparity can support a discrimination claim even if each individual’s score looks “neutral.”

Lack of proper validation makes the system legally vulnerable. Employers are supposed to validate that their AI tools don’t have discriminatory effects. Most don’t. That failure creates legal liability.
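
The “demographic patterns” point above comes down to simple arithmetic. The Python sketch below uses hypothetical headcounts, and the class and function names are ours. It compares termination rates between two groups. An actual disparate impact analysis typically involves statistical testing and expert review, so treat this as an illustration only.

```python
from dataclasses import dataclass


@dataclass
class GroupCounts:
    """Headcount for one group before the layoff and how many were terminated."""
    name: str
    headcount: int
    terminated: int

    @property
    def termination_rate(self) -> float:
        # Share of the group that was selected for termination.
        return self.terminated / self.headcount


def rate_ratio(group: GroupCounts, comparison: GroupCounts) -> float:
    """How many times more often `group` was terminated than `comparison`."""
    return group.termination_rate / comparison.termination_rate


# Hypothetical numbers for illustration only.
over_40 = GroupCounts("40 and over", headcount=80, terminated=20)
under_40 = GroupCounts("under 40", headcount=120, terminated=12)

for g in (over_40, under_40):
    print(f"{g.name}: {g.termination_rate:.0%} terminated")

print(f"Rate ratio: {rate_ratio(over_40, under_40):.1f}x")
```

With these made-up numbers, workers 40 and over were terminated at a 25% rate versus 10% for younger workers, a ratio of 2.5. A gap like that does not prove discrimination on its own, but it is the kind of pattern worth documenting.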

Warning Signs of Discriminatory Algorithmic Layoffs

- Sudden scoring changes before the layoff announcement

- Unexplained metrics that weren’t previously used for evaluation

- Automated rankings that ignore actual work quality or client satisfaction

- Employees with strong performance histories receiving low AI scores

- Protected groups terminated at higher rates than their proportion in the workforce

- No clear explanation of how the algorithm reached its conclusions

- Management hiding behind “the system decided” without accountability

What Evidence Should You Collect?

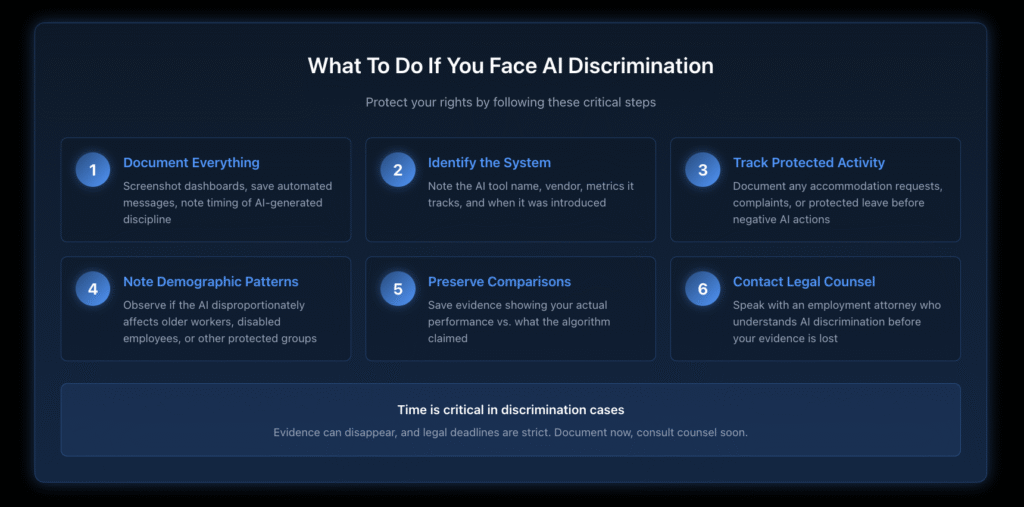

Strong documentation protects your legal rights. If you suspect AI discrimination, gather:

Screenshots of performance dashboards showing your scores, rankings, and any sudden changes after protected activity or the introduction of new systems.

Automated write-ups, coaching emails, or disciplinary notices generated by AI systems. Save everything, even if it seems minor.

Notes about when the AI tool was introduced and any changes to your treatment or evaluation afterward.

Details about the tool itself, including vendor name (Workday, Salesforce Einstein, Microsoft Viva, etc.), the metrics it tracks, and how your employer described it.

Information about other employees affected, including demographics, if you’re aware of patterns. Don’t share this with your employer, but noting who else was targeted can reveal discrimination patterns.

Messages from management about “system-generated decisions” or statements that “the algorithm” made choices. Employers shouldn’t hide behind automation.

Scheduling reports, attendance records, or productivity metrics created by algorithmic systems, especially if they penalize you for protected activities.

Documentation of protected activity like accommodation requests, discrimination complaints, FMLA leave, or whistleblowing reports—and the timing of any negative AI-generated evaluations that followed.

Comparisons between your AI scores and your actual performance, including client feedback, project completions, revenue generation, or quality metrics that the algorithm ignored.
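
One way to organize the timing evidence described above is a simple dated log. The Python sketch below uses entirely hypothetical scores and dates, and the variable names are ours. It only shows how to line up automated scores against the date of a protected activity; it does not prove causation or replace legal review.

```python
from datetime import date
from statistics import mean

# Hypothetical log of automated scores: (date issued, score out of 100).
score_log = [
    (date(2024, 1, 15), 88),
    (date(2024, 2, 15), 91),
    (date(2024, 3, 15), 90),
    (date(2024, 4, 15), 62),
    (date(2024, 5, 15), 58),
]

# Hypothetical date of a protected activity, such as an accommodation request.
protected_activity_date = date(2024, 3, 20)

# Split the log into scores issued before and after that date.
before = [score for issued, score in score_log if issued < protected_activity_date]
after = [score for issued, score in score_log if issued >= protected_activity_date]

average_before = mean(before)
average_after = mean(after)

print(f"Average score before: {average_before:.1f}")
print(f"Average score after:  {average_after:.1f}")
print(f"Change: {average_after - average_before:+.1f} points")
```

In this made-up log, the average falls by roughly 30 points after the protected activity. Keeping dates and scores side by side like this makes it easier to show a timeline alongside the screenshots and notices you’ve saved.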

How Nisar Law Challenges AI Discrimination

We investigate and challenge algorithmic employment decisions using comprehensive legal and technical analysis.

Analyzing algorithmic scoring systems to identify discriminatory metrics and biased design choices.

Reviewing demographic impact data to prove disparate impact on protected classes.

Comparing pre-AI and post-AI job expectations to show how automation created discriminatory standards.

Investigating whether the AI was properly validated or if your employer skipped required bias testing.

Determining if the employer ignored human overrides or manipulated inputs to target specific workers.

Examining violations under Title VII, ADA, ADEA, FMLA, and New York laws, including NYSHRL, NYCHRL, and Labor Law Sections 740 and 741.

Bringing whistleblower claims when you faced retaliation for challenging harmful AI systems.

Our approach combines employment law expertise with an understanding of how algorithmic systems actually work—not just how employers claim they work.

Contact Nisar Law About AI Discrimination

If you believe an automated system, algorithm, or AI tool was used to discipline, demote, or terminate you—or if you were retaliated against after raising concerns about biased AI—you may have strong legal claims.

Employers cannot hide discrimination behind algorithms. You have rights even when the decision came from a machine. The law holds companies responsible for the AI tools they choose and the discriminatory outcomes those tools produce.

Contact Nisar Law today for a consultation. We’ll analyze your situation, examine the AI systems involved, and help you understand your legal options.

Don’t let algorithmic discrimination go unchallenged. Your rights matter—whether the discrimination came from a person or a computer.

Types of AI Discrimination Cases We Handle

- AI discrimination in hiring and recruiting

- Algorithmic bias against protected classes in performance evaluation

- AI-based performance reviews and scoring

- Automated write-ups, discipline, or termination

- AI-driven retaliation after discrimination complaints or whistleblowing

- Algorithmic layoff selection and AI-driven RIF discrimination

- AI surveillance and productivity monitoring violations

- AI-based attendance, punctuality, or availability scoring

- Discriminatory automated scheduling

- AI misinterpretation of disability-related behaviors or accommodations

- Algorithmic decision-making tied to pregnancy discrimination

- Age discrimination in AI-driven workforce reductions

- AI retaliation against union activities or wage-hour complaints

- Automated systems targeting workers who’ve taken protected leave

Why We’re the Right Choice

- Experienced Federal Employment Attorneys

- Personalized Service and Client-Focused Approach

- Proven Track Record of Success

- Nationwide Representation for Federal Employees

- In-depth Knowledge of Federal Agency Procedures

Frequently Asked Questions:

What are algorithmic bias and AI discrimination?

Algorithmic bias occurs when automated systems produce unfair outcomes that systematically disadvantage certain groups. In employment, this means AI tools that make decisions about hiring, promotion, discipline, or termination in ways that violate civil rights laws. For example, a resume-scanning algorithm trained on a company’s historically male workforce might downgrade female applicants. AI discrimination becomes illegal when it harms workers based on protected characteristics like age, race, gender, disability, or other categories covered by employment law.

Are AI-driven layoffs legal?

AI-driven layoffs are only legal if they don’t discriminate. When companies use algorithms to select employees for termination, those systems must not disproportionately harm protected groups. If an automated layoff selection tool consistently ranks older workers, pregnant employees, disabled workers, or recent discrimination complainants as “low performers,” that creates legal liability. The fact that an algorithm made the selection doesn’t shield the employer from responsibility. Courts can examine whether the AI system had a discriminatory impact, whether it was properly validated, and whether the employer knew or should have known about bias in the system.

What is an example of AI discrimination?

A productivity monitoring system flags a worker with diabetes as “inactive” because she takes protected medical breaks throughout the day. Despite her strong work output, the AI ranks her as a low performer based on time away from her keyboard. When layoffs occur, the algorithm selects her for termination. That’s AI discrimination: the automated system penalized her disability-related behavior in violation of the ADA. Another example: an AI hiring tool systematically rejects older applicants because it learned to prefer candidates who use specific slang or social media platforms popular with younger workers. This creates age discrimination even though the algorithm never explicitly considered age.

What are the three main types of AI bias?

In employment contexts, the three main types of AI bias are: (1) data bias, where the training data reflects historical discrimination (if an AI learns from a company where management was predominantly white and male, it may perpetuate those patterns); (2) algorithmic bias, where the system’s design choices create discriminatory outcomes, such as weighting metrics that disadvantage workers with disabilities; and (3) interaction bias, where the way humans use the AI system introduces discrimination, such as managers manipulating inputs to target specific workers. All three types can violate employment discrimination laws.

What is an example of discriminatory AI in employment?

Discriminatory AI in employment might be an automated performance evaluation system that scores workers who take FMLA leave as less productive, even though their leave is legally protected. Or consider an AI coaching tool that analyzes email communication and flags non-native English speakers for “poor communication skills” based on language differences rather than actual job performance. Another example is facial recognition software used for timekeeping that performs poorly on darker skin tones, causing Black and Latino workers to receive automated attendance violations they didn’t deserve. Each of these systems discriminates based on protected characteristics.

What is the real reason for tech layoffs?

While companies often cite business necessity, many tech layoffs involve discriminatory algorithmic selection systems. The “real reason” often includes: companies using AI to identify workers they perceive as expensive (often older employees), automated systems that rank employees taking protected leave as low performers, algorithms that target workers who’ve complained about discrimination or harassment, and RIF selection tools that disproportionately affect specific demographic groups. If your tech employer used an AI system to select you for layoff and you’re in a protected class or engaged in protected activity, there may be a discrimination component regardless of the company’s public explanation.

What are the five types of bias in AI?

The five types commonly discussed in AI discrimination cases are: (1) selection bias, where training data doesn’t represent the actual workforce; (2) measurement bias, where the system measures the wrong things or measures them inaccurately for certain groups; (3) confirmation bias, where the algorithm reinforces existing stereotypes; (4) exclusion bias, where the system excludes important variables that would reveal discrimination; and (5) label bias, where the classifications used to train the AI reflect discriminatory assumptions. In employment law, any of these can create violations of Title VII, the ADA, ADEA, or New York anti-discrimination laws when they result in adverse employment actions against protected groups.